VCF 9.1 introduces VCF Management Services - but what is it and why do I need it?

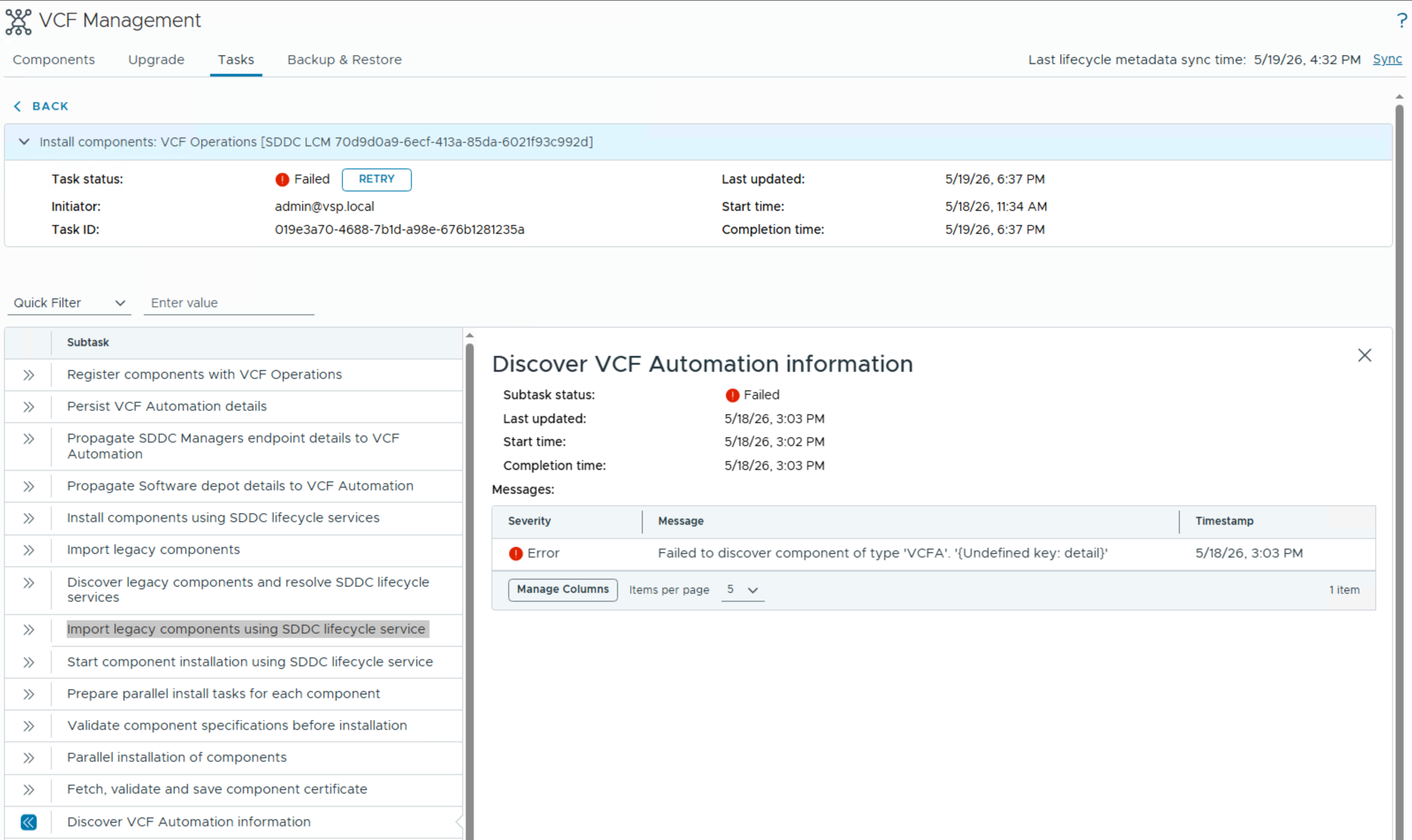

VMSP tasks fail with an "undefined key" error when integrating VCFA into the VMSP

VMSP tasks fail due to a Platform Health Check error reporting that logging-operator-fluentd is in the wrong resource state, InProgress

vSphere 8 U3 allows you to retain vNUMA - but wasn't that the default anyway?

When your runtime EVC mode does not match your expectations

VMware Aria Operations 8.17.1 introduced an exciting new feature that enhances notifications.

This is a now-supported guide to modifying the vSphere 8 cipher suites

What I learned from Routerlinks coming from the management API

Requalification after three years

Using the vSphere Automation REST API